admin: First posted on 2016 12 02

I have never made as many mistakes preparing a piece of software as I did with the Orinj phase oscilloscope. Some were understandable, some were just not that smart.

A stylized oscilloscope

A phase oscilloscope is described in our Wiki. Note how the description focuses on the simplest signals – those consisting of a simple wave, such as a single sine or cosine wave. In a properly designed oscilloscope, these simple signals produce nice curves (Lissajous curves). Anything more complex than that and the beautiful Lissajous curves disintegrate into complex blotches.
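To make the "simple signal" case concrete, here is a minimal sketch that generates the points of a Lissajous curve from two pure sine waves. The function name `lissajous_points` and its parameters are made up for illustration and are not part of Orinj; the left channel is simply plotted on the x axis and the right channel on the y axis.

```python
import math

def lissajous_points(freq_left, freq_right, phase_right, count=1000, duration=1.0):
    """Return (x, y) pairs with x = left channel and y = right channel."""
    points = []
    for i in range(count):
        t = duration * i / count
        left = math.sin(2 * math.pi * freq_left * t)
        right = math.sin(2 * math.pi * freq_right * t + phase_right)
        points.append((left, right))
    return points

# Same frequency, same phase: all points fall on the diagonal x == y.
in_phase = lissajous_points(1.0, 1.0, 0.0)
# Same frequency, 90 degree phase difference: points fall on the unit circle.
quadrature = lissajous_points(1.0, 1.0, math.pi / 2)
# Frequency ratio of 1:2: a figure-eight shape.
figure_eight = lissajous_points(1.0, 2.0, 0.0)
```

With real music, each channel is a sum of many such waves with changing amplitudes, which is why the clean shapes above turn into blotches.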

My first inclination, of course, was to produce nice-looking curves. After all, what we care about in a phase oscilloscope is being able to discern differences in amplitude, frequency, and phase between the left and right channels of a stereo recording. Trying to synthesize a simple single sine or cosine wave from a complex signal, however, is just not smart. You cannot simplify the content of a complex signal without losing important information.

The next natural step was to decompose the actual left and right signals into their Fourier transform components. We could then take the resulting components (amplitudes, frequencies, phases) and reconstruct the signal for the purposes of drawing the oscilloscope (a scatterplot of the left and right signal, one for the x axis and one for the y axis). This, as it turns out, is equally pointless. Why should we decompose and reconstruct the signal, when we have the actual signal? We could just draw the actual signal.

There are, however, a couple of benefits to decomposing and reconstructing the signal.

- First, we can take a small transform – say 100 points – but rather than drawing the reconstructed signal for 100 points, we can draw it for 1000 points, making more complete, still symmetric or periodic curves. The alternative would be to get 1000 points of the original signal, which will not produce such nice curves. Or you could take 100 points and repeat them for a periodic signal, but the result will not be very smooth.

- Second, if you take a small Fourier transform of a signal, especially a signal with lower frequencies, you may end up with a sizeable DC component in the transform. This will push the oscilloscope graph into one of the corners. When reconstructing the signal, you can center the oscilloscope graph by simply ignoring the DC component.
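The two benefits above can be sketched together: transform 100 points, skip the DC component, and evaluate the reconstructed signal at 1000 points. This is only an illustration under stated assumptions, not the Orinj code. It uses a naive DFT for readability (a real implementation would use an FFT), and the names `dft` and `reconstruct` are made up here.

```python
import cmath
import math

def dft(samples):
    """Naive discrete Fourier transform with the exp(-i...) forward convention."""
    n = len(samples)
    return [sum(samples[j] * cmath.exp(-2j * math.pi * k * j / n) for j in range(n))
            for k in range(n)]

def reconstruct(spectrum, points, drop_dc=True):
    """Evaluate the reconstructed real signal at `points` evenly spaced
    positions covering one period of the original window."""
    n = len(spectrum)
    out = []
    for i in range(points):
        t = i * n / points  # position measured in original sample intervals
        value = 0.0 if drop_dc else spectrum[0].real / n
        for k in range(1, n // 2 + 1):
            # Bins k and n - k are combined into one cosine, hence the factor 2.
            # The Nyquist bin (2k == n) has no partner and is counted once.
            weight = 1.0 if 2 * k == n else 2.0
            amplitude = abs(spectrum[k]) / n
            phase = cmath.phase(spectrum[k])
            value += weight * amplitude * math.cos(2 * math.pi * k * t / n + phase)
        out.append(value)
    return out

# Two 100-point channels, both sitting on a DC offset of 0.5.
left = [0.5 + math.sin(2 * math.pi * 3 * j / 100) for j in range(100)]
right = [0.5 + math.cos(2 * math.pi * 3 * j / 100) for j in range(100)]

# 1000 points per channel, centered because the DC component is skipped.
x = reconstruct(dft(left), 1000)
y = reconstruct(dft(right), 1000)
curve = list(zip(x, y))
```

The reconstruction is periodic by construction, so the drawn curve closes on itself even though it has ten times as many points as the transform.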

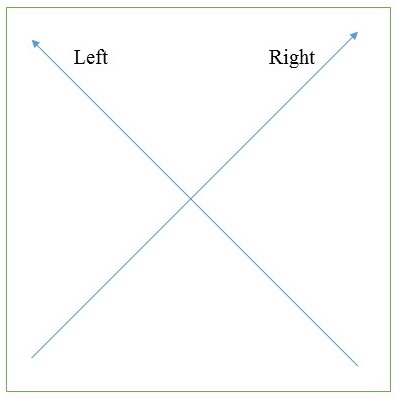

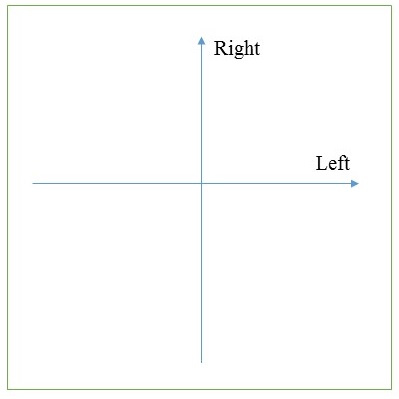

The phase oscilloscope orientation

It is always better to get an oscilloscope that looks like the following

than one that looks like the following.

In the first oscilloscope, left is left and right is right. A signal in which the two channels are the same will appear as a vertical line in the center. This line will lean toward the left or right axis when the amplitude of the left or right channel, respectively, becomes larger than the amplitude of the other channel.
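One way to get this orientation is to rotate each (left, right) sample pair by 45 degrees before plotting. The sketch below assumes a common convention in which the left channel points to the upper left and the right channel to the upper right; the function name `rotate_for_display` is hypothetical and the actual Orinj code may differ.

```python
import math

def rotate_for_display(left, right):
    """Map a stereo sample pair to (x, y) in a display rotated by 45 degrees."""
    x = (right - left) / math.sqrt(2)
    y = (left + right) / math.sqrt(2)
    return x, y

# Identical channels land on the vertical axis (x is zero).
mono = rotate_for_display(0.3, 0.3)
# A left-only sample lands on the diagonal pointing to the upper left.
left_only = rotate_for_display(1.0, 0.0)
# A right-only sample lands on the diagonal pointing to the upper right.
right_only = rotate_for_display(0.0, 1.0)
```

The division by the square root of 2 keeps distances from the center unchanged, so the rotation does not rescale the graph.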

However, producing a graph that is essentially rotated by 45 degrees is a computational nightmare, especially if your plot is not exactly square. Thus, half a day was wasted fixing layouts to ensure that: a) the graph is correct; and b) the plot is square.
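The "plot is square" part can be sketched as follows: find the largest centered square inside whatever panel the layout provides, then map display coordinates into it. Both function names and the `limit` parameter are assumptions made for this illustration. Note that after a 45 degree rotation, samples in the range -1 to 1 can reach about 1.414 along the axes, so `limit` would need to be chosen accordingly.

```python
def square_plot_area(width, height, margin=0):
    """Return (x0, y0, side) of the largest centered square inside a panel."""
    side = max(min(width, height) - 2 * margin, 0)
    x0 = (width - side) // 2
    y0 = (height - side) // 2
    return x0, y0, side

def to_pixel(x, y, area, limit=1.0):
    """Map display coordinates in [-limit, limit] to pixel coordinates.
    Screen y grows downward, so the y axis is flipped."""
    x0, y0, side = area
    px = x0 + (x / limit + 1.0) * 0.5 * side
    py = y0 + (1.0 - y / limit) * 0.5 * side
    return round(px), round(py)

# A 400 x 300 panel leaves a 300 x 300 square with 50 pixels free on each side.
area = square_plot_area(400, 300)
center = to_pixel(0.0, 0.0, area)
```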

A real time phase oscilloscope

As it turns out, the Orinj effect framework does not support real time signal monitoring. This makes sense. Orinj effects are designed to process the signal before the signal is sent to output for playback. These effects operate in "real time", in the sense that the sound data is not pre-processed and stored on the hard disk, but the original sound data is read from the hard disk, processed through the effects, and sent for playback at the time of playback. However, there is still a delay between the time an effect looks at a piece of sound data and the time the piece of data is sent to output. If the Orinj sound buffers are large, this delay can also be large. The Orinj sound buffers can be several milliseconds or several seconds long.

The phase oscilloscope that we are putting together now operates in pseudo real time. The effect itself processes sound data and stores information just as any other effect does. The oscilloscope graph itself pulls these data at specific intervals, approximately at the time when the actual sound piece displayed is also being played. These two software parts, however, are somewhat independent. And they cannot be properly synchronized, as the Orinj effect framework does not allow access to the playback time.

This means that the oscilloscope graph may occasionally display data before the corresponding playback, or may occasionally wait for data, getting stuck at the same image. We could modify the Orinj effect framework to allow access to the playback time, but that seems like overkill. After all, nobody should be interested in the phase oscilloscope display at precise points in time.
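The split between the effect and the graph can be sketched as a small shared buffer that one side writes and the other side polls. This is not the Orinj effect framework; the classes `ScopeBuffer` and `ScopeDisplay` are hypothetical and only illustrate the "somewhat independent" design described above.

```python
import threading

class ScopeBuffer:
    """Holds the most recent block of stereo samples written by the effect."""

    def __init__(self):
        self._lock = threading.Lock()
        self._block = None
        self._sequence = 0

    def write(self, left, right):
        """Called by the processing side for every block it handles."""
        with self._lock:
            self._block = (list(left), list(right))
            self._sequence += 1

    def read(self):
        """Called by the display side; returns (sequence number, block)."""
        with self._lock:
            return self._sequence, self._block


class ScopeDisplay:
    """Polls the buffer on a timer and redraws only when new data arrived."""

    def __init__(self, buffer):
        self._buffer = buffer
        self._last_sequence = 0
        self.frame = None

    def poll(self):
        sequence, block = self._buffer.read()
        if sequence == self._last_sequence:
            return False  # no new data: the previous image stays on screen
        self._last_sequence = sequence
        self.frame = block
        return True
```

Because the display has no access to the playback time, it just shows whatever was written last. If the effect runs ahead, the graph is early; if no new block has arrived, `poll` returns `False` and the old image stays.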

The Fourier transform

There is no excuse for these mistakes, but here they are:

- I managed to confuse the use of the phase computed with the transform – is it cos(2 π f (t – τ)) for some phase τ or is it cos(2 π f t – θ) for some phase θ? It is the second: the phase is an angle, not a time delay.

- I remembered that the transform of a real signal produces redundant information. The components in the second half are the complex conjugates of those in the first half, so their amplitudes are the same and their phases have the opposite sign. I tried to ignore the second half. You cannot simply ignore the second half when reconstructing the signal; dropping it without compensation halves the amplitude of every non-DC component.
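Both mistakes are easy to reproduce on a single cosine. The sketch below assumes the usual forward transform with exp(-i 2 π k n / N), in which case θ in cos(2 π f t – θ) is the negative of the angle of the complex component; with the opposite sign convention the sign of θ flips. The naive `dft` and the helper `inverse_sum` are written for this illustration only.

```python
import cmath
import math

def dft(samples):
    """Naive discrete Fourier transform with the exp(-i...) forward convention."""
    n = len(samples)
    return [sum(samples[j] * cmath.exp(-2j * math.pi * k * j / n) for j in range(n))
            for k in range(n)]

def inverse_sum(spectrum, n, bins):
    """Sum the inverse-transform terms of the selected bins for sample index n."""
    size = len(spectrum)
    return sum(spectrum[k] * cmath.exp(2j * math.pi * k * n / size) for k in bins) / size

N, K, A, THETA = 64, 5, 0.8, 0.6
signal = [A * math.cos(2 * math.pi * K * n / N - THETA) for n in range(N)]
spectrum = dft(signal)

# The phase is an angle: theta is recovered directly from the component.
theta_recovered = -cmath.phase(spectrum[K])
amplitude_recovered = 2 * abs(spectrum[K]) / N

# Second half mirrors the first as complex conjugates.
mirror_ok = all(abs(spectrum[N - k] - spectrum[k].conjugate()) < 1e-9
                for k in range(1, N // 2))

# Using all bins gives back the signal; using only the first half gives
# a signal with half the amplitude.
full = [inverse_sum(spectrum, n, range(N)).real for n in range(N)]
half = [inverse_sum(spectrum, n, range(N // 2)).real for n in range(N)]
```

If only the first half is kept, each of its non-DC components has to be counted twice to make up for the missing mirror image.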

What now?

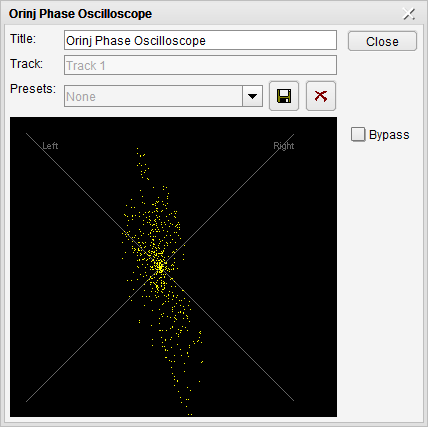

The phase oscilloscope is almost ready. An (preliminary) example of it is below (on a signal of two completely different left and right channels).

The only good thing about this waste of time is that most of the code used for the phase oscilloscope can also be used in the future Orinj spectrogram.

authors: mic
